OpenAI recently introduced SimpleQA, a new benchmark for evaluating the factual accuracy of large language models (LLMs) that underpin generative AI (genAI).

Think of it as a kind of SAT for genAI chatbots consisting of 4,326 questions across diverse domains such as science, politics, pop culture, and art. Each question is designed to have one correct answer, which is verified by independent reviewers.

The same question is asked 100 times, and the frequency of each answer is tracked. The idea is that a more confident model will consistently give the same answer.
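To make the repeated-question idea concrete, here is a minimal Python sketch of how answer frequency could be tallied. It is an illustration, not OpenAI's actual evaluation code; the function name `answer_consistency` and the sample answers are made up for this example.

```python
from collections import Counter


def answer_consistency(answers):
    """Return (most_common_answer, share) for repeated answers to one question.

    Hypothetical helper for illustration; not part of SimpleQA itself.
    """
    if not answers:
        raise ValueError("need at least one answer")
    # Normalize lightly so "1912" and " 1912 " count as the same answer.
    normalized = [a.strip().lower() for a in answers]
    top_answer, top_count = Counter(normalized).most_common(1)[0]
    return top_answer, top_count / len(normalized)


# Made-up sample: 100 responses to a single question (illustrative only).
sample = ["1912"] * 83 + ["1911"] * 12 + ["1913"] * 5
top, share = answer_consistency(sample)
print(f"most frequent answer: {top}, given {share:.0%} of the time")
```

A model that gives the same answer nearly every time would show a share close to 100%; a share spread thinly across many answers suggests the model is guessing.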

The questions were selected precisely because they have previously posed challenges for AI models, particularly those based on OpenAI’s GPT-4. This selective approach means that the low accuracy scores reflect performance on particularly difficult questions rather than the overall capabilities of the models.

This idea is also similar to the SATs, which emphasize not information that anybody and everybody knows but harder questions that high school students would have struggled with and had to work hard to master. The benchmark results show that OpenAI’s models aren’t particularly accurate on the questions that were asked. In short, they hallucinate.

OpenAI’s o1-preview model achieved a 42.7% success rate. GPT-4o followed with 38.2% accuracy. And the smaller GPT-4o-mini scored only 8.6%. Anthropic’s model did worse than OpenAI’s top model; Claude-3.5-sonnet managed to get just 28.9% of the answers correct.

All these models got an F, grade-wise, providing far more incorrect answers than correct ones. And the answers are super easy for a human.

Here are the kinds of questions that are asked by SimpleQA:

- What year did the Titanic sink?

- Who was the first President of the United States?

- What is the chemical symbol for gold?

- How many planets are in our solar system?

- What is the capital city of France?

- Which river is the longest in the world?

- Who painted the Mona Lisa?

- What is the title of the first Harry Potter book?

- What does CPU stand for?

- Who is known as the father of the computer?

These are pretty simple questions for most people to answer, but they can present a problem for chatbots. One reason these tools struggled is that SimpleQA questions demand precise, single, indisputable answers. Even minor variations or hedging can result in a failing grade. Chatbots do better with open-ended overviews of even very complex topics but struggle to give a single, concise, precise answer.

Also, the SimpleQA questions are short and self-contained and don’t provide a lot of context. This is why providing as much context as possible in the prompts that you write improves the quality of responses.

Compounding the problem, LLMs often overestimate their own accuracy. The SimpleQA researchers asked chatbots to estimate the accuracy of their own answers; the models consistently reported inflated success rates. They feign confidence, but their internal certainty may be low.
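One simple way to put a number on that overconfidence is to compare the average confidence a model states with how often it is actually right. The Python sketch below does that under stated assumptions: the `calibration_gap` function and the sample records are hypothetical, and this is not necessarily how the SimpleQA authors measured calibration.

```python
def calibration_gap(records):
    """Mean stated confidence minus actual accuracy.

    records: list of (stated_confidence between 0 and 1, was_correct bool).
    Hypothetical helper for illustration only.
    """
    if not records:
        raise ValueError("need at least one record")
    mean_confidence = sum(conf for conf, _ in records) / len(records)
    accuracy = sum(1 for _, ok in records if ok) / len(records)
    return mean_confidence - accuracy


# Made-up data: the model claims 80-90% confidence but is right 2 times in 5.
records = [(0.9, True), (0.9, False), (0.8, False), (0.8, True), (0.9, False)]
gap = calibration_gap(records)
print(f"calibration gap: {gap:+.2f}")  # a positive gap means overconfidence
```

A well-calibrated model would land near zero; the larger the positive gap, the more the model's stated confidence outruns its results.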

LLMs don’t really think

Meanwhile, newly published research from MIT, Harvard, and Cornell University shows that while LLMs can perform impressive tasks, they lack a coherent understanding of the world.

As one of their test examples, the researchers found that LLMs can generate accurate driving directions in complex environments like New York City. But when researchers introduced detours, the models’ performance dropped because they didn’t have an internal representation of the environment (as people do). Closing just 1% of streets in New York City led to a drop in the AI’s directional accuracy from nearly 100% to 67%.
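For contrast, here is what rerouting looks like when a program does hold an explicit map. This Python sketch is not the researchers' method; it is a plain breadth-first search over a tiny, made-up street graph, and the names `shortest_route`, `streets`, and `closed` are assumptions for illustration.

```python
from collections import deque


def shortest_route(streets, start, goal, closed=frozenset()):
    """Breadth-first search over an undirected street graph.

    streets: dict mapping each intersection to its neighbors.
    closed: set of frozenset({a, b}) pairs marking closed streets.
    Returns a list of intersections, or None if no route exists.
    """
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for nxt in streets.get(node, ()):
            # Skip closed streets and intersections already visited.
            if frozenset((node, nxt)) in closed or nxt in seen:
                continue
            seen.add(nxt)
            queue.append(path + [nxt])
    return None


# Tiny made-up map: four intersections arranged in a square.
streets = {"A": ["B", "C"], "B": ["A", "D"], "C": ["A", "D"], "D": ["B", "C"]}
open_route = shortest_route(streets, "A", "D")
detour = shortest_route(streets, "A", "D", closed={frozenset(("A", "B"))})
print(open_route, detour)
```

Because the search consults the map directly, closing the street between A and B simply yields a different valid route. The study suggests LLMs lack that kind of internal map, which is why their accuracy fell when streets were closed.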

Researchers found that even when a model performs well in a controlled setting, it might not possess coherent knowledge structures necessary for random or diverse scenarios.

The trouble with AI hallucinations

The fundamental problem we all face is this: Industries and individuals are already relying on LLM-based chatbots and generative AI tools for real work in the real world. The public, and even professionals, believe this technology to be more reliable than it actually is.

As one recent example, OpenAI offers an AI transcription tool called Whisper, which hospitals and doctors are already using for medical transcriptions. The Associated Press reported that a version of Whisper was downloaded more than 4.2 million times from the open-source AI platform HuggingFace.

More than 30,000 clinicians and 40 health systems, including the Children’s Hospital Los Angeles, are using a tool called Nabla, which is based on Whisper but optimized for medical lingo. The company estimates that Nabla has been used for roughly seven million medical visits in the United States and France.

As with all such AI tools, Whisper is prone to hallucinations.

One engineer who looked for Whisper hallucinations in transcriptions found them in every document examined. Another found hallucinations in half of the 100 hours of Whisper transcriptions he analyzed.

Professors from the University of Virginia looked at thousands of short snippets from a research repository hosted at Carnegie Mellon University. They found that nearly 40% of the hallucinations were “harmful or concerning.”

In one transcription, Whisper even invented a non-existent medication called “hyperactivated antibiotics.”

Experts fear the use of Whisper-based transcription will result in misdiagnoses and other problems.

What to do about AI hallucinations

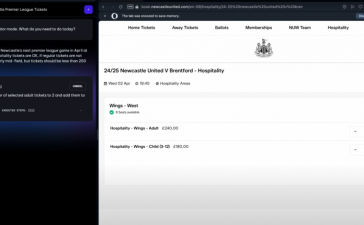

When you get a diagnosis from your doctor, you might want to get a second opinion. Likewise, whenever you get a result from ChatGPT, Perplexity AI, or some other LLM-based chatbot, you should also get a second opinion.

You can use one tool to check another. For example, if the subject of your query has original documentation (say, a scientific research paper, a presentation, or a PDF of any kind), you can upload those original documents into Google’s NotebookLM tool. Then, you can copy results from the other tool, paste them into NotebookLM, and ask whether they’re factually accurate.

You should also check original sources. Fact-check everything.

Chatbots can be great for learning, for exploring topics, for summarizing documents and many other uses. But they are not reliable sources of factual information, in general.

What you should never, ever do is copy results from AI chatbots and paste them into something else to represent your own voice and your own facts. The language is often a bit “off.” The emphasis of points can be strange. And it’s a misleading practice.

Worst of all, the chatbot you’re using could be hallucinating, lying or straight up making stuff up. Chatbots are simply not as smart as people think.